Your AI Agent Shouldn't Be Able to Read Your Diary

Imagine you have a personal AI assistant. Over months, you tell it everything — your health worries, your relationship friction, your career anxieties, the habits you’re trying to break. It remembers. It helps.

Now ask yourself: who else can read that?

The honest answer, with every AI memory product that exists today, is: the company running it.

The problem isn’t the AI. It’s where the memory lives.

When you use an AI agent with persistent memory — whether that’s a health companion, a journaling assistant, a personal coach, or a productivity tool — your conversations get stored somewhere. That “somewhere” is a server owned by the platform. And the platform can read it.

This is true even when there’s a privacy policy. A privacy policy is a promise. It can be broken, subpoenaed, acquired, or quietly changed. A cryptographic guarantee cannot.

I spent a few weeks building something that fixes this at the foundation:

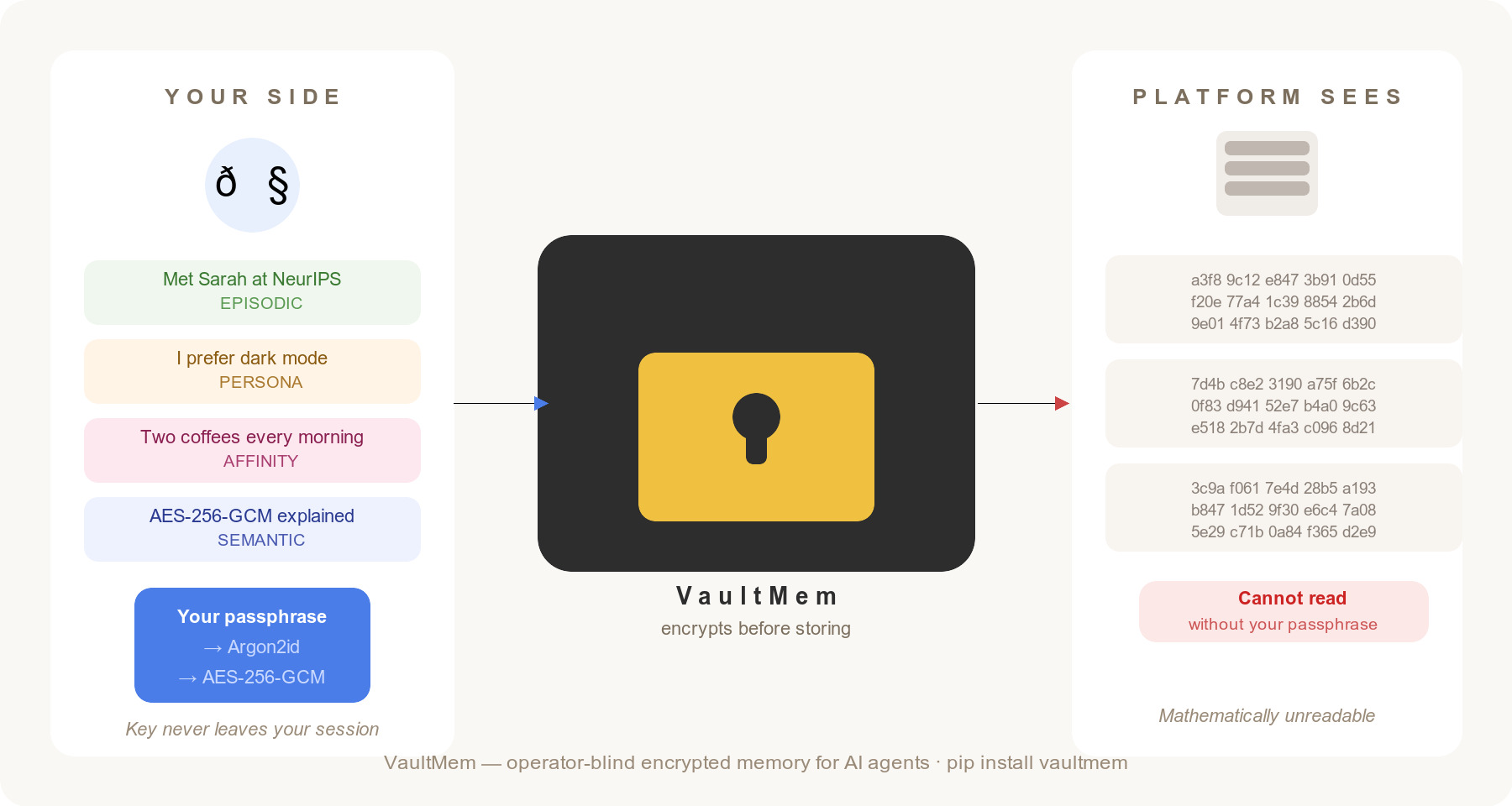

VaultMem — a Python library that gives AI agents encrypted, persistent, portable memory where the platform operator mathematically cannot read what users store.

Not “we promise not to look.” Not “we anonymize your data.” The key never leaves your session. The math makes it true regardless of what the policy says.

A real-world example: the health companion

Say you’re building a personal health AI — the kind of agent a person with a chronic condition might use to track symptoms, medications, and how they’re feeling day to day.

Without VaultMem, your architecture probably looks like this:

- User tells agent “my migraines have been worse since I started the new medication”

- Agent stores that in a database you control

- You — the developer — can query that database

- So can anyone who compromises it, or a legal subpoena, or your future acquirer

With VaultMem, it looks like this:

from vaultmem import VaultSession, LocalEmbedder

with VaultSession.open("./alice_vault", passphrase="alice_secret",

embedder=LocalEmbedder()) as s:

s.add("Migraines worse since starting new medication on March 15th")

s.add("Blood pressure 120/80 at checkup — within normal range")

s.add("Feeling anxious about the scan results, haven't slept well")

results = s.search("recent health concerns", top_k=3)

You — the developer — receive an encrypted blob you cannot open. Alice holds the key. Her memories are hers.

The same principle applies to a therapist AI, a relationship coach, a financial planner, a personal journal. Anywhere the content is deeply personal and the user has good reason not to trust a third party with it.

What it does

The library handles the full memory lifecycle — not just encryption:

- Four memory types auto-classified from content: episodic (events), semantic (facts), persona (preferences), procedural (how-tos)

- Smart retrieval that surfaces recurring habits and patterns above one-off mentions — so “my morning coffee” surfaces a pattern summary, not just the last time you mentioned coffee

- Time-aware search — ask “what was I doing last summer?” and it searches by when events actually happened, not when you typed them in

- Multi-modal — photos, voice notes, PDFs ingested as encrypted memory atoms with EXIF dates and GPS stripped from metadata

- Portable — the

.vmemformat is open and self-describing; your vault can move between apps - Production backends — S3 for storage, Postgres for indexing, HNSW for fast search at scale

Three core dependencies. No mandatory cloud service. No phone-home.

It’s early — and I’d love feedback

This is a work in progress. The library is usable and the core is solid, but I’m still building toward the vision.

Try the interactive demo (no install needed): vaultmem-demo.streamlit.app

It runs a pre-seeded vault with 36 example memories and shows you both sides — what you see (meaningful memories retrieved by your question) and what the platform sees (encrypted bytes that reveal nothing).

Install the SDK:

pip install vaultmem

Read the technical writeups:

- Part 1 — the encryption model, memory types, and retrieval

- Part 2 — multi-modal, temporal search, and production backends

The research: VaultMem implements Layer 1 of the Memory-as-Asset framework — a formal model for user-owned AI memory. The preprint is on Zenodo.

If you’re building an AI agent and want to give your users memory that’s genuinely theirs — or if you have thoughts on where this should go — I’d love to hear from you.